The Supervisor Challenge

Imagine a plant shutdown event. Sarah, a supervisor, is responsible for 10 inspectors, each of whom completes 10 inspections per day. That leaves Sarah reviewing 100 inspection reports daily. She must identify critical findings, ensure they are escalated appropriately, and confirm that everything meets compliance standards. With each review taking roughly two minutes, more than 3 hours a day are spent combing through reports. Over a busy week, that time commitment can become unmanageable.

Enter AI. With an AI-assisted prioritisation model, data is automatically reviewed and interpreted, flagging only those reports that truly warrant the supervisor’s attention. Instead of poring over 100 entire documents, Sarah might only need to assess 2 high-risk ones in detail — reducing her review load from about 200 minutes to just 4 minutes. She can now spend that extra time on more meaningful tasks: developing an action plan for pressing issues or coordinating with other departments.
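The arithmetic behind Sarah's workload is easy to check. The short sketch below uses only the figures quoted above (10 inspectors, 10 inspections each, roughly two minutes per review, 2 flagged reports); the function name `review_minutes` is purely illustrative.

```python
def review_minutes(reports: int, minutes_per_report: float = 2.0) -> float:
    """Total supervisor review time in minutes."""
    return reports * minutes_per_report


inspectors = 10
inspections_per_inspector = 10
total_reports = inspectors * inspections_per_inspector  # 100 reports a day

manual = review_minutes(total_reports)  # every report reviewed in full
assisted = review_minutes(2)            # only the 2 flagged reports

print(f"Manual review:   {manual:.0f} min ({manual / 60:.1f} h)")
print(f"Assisted review: {assisted:.0f} min")
print(f"Time freed up:   {manual - assisted:.0f} min per day")
```

Changing the number of flagged reports shows how sensitive the saving is to how selective the prioritisation model turns out to be in practice.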

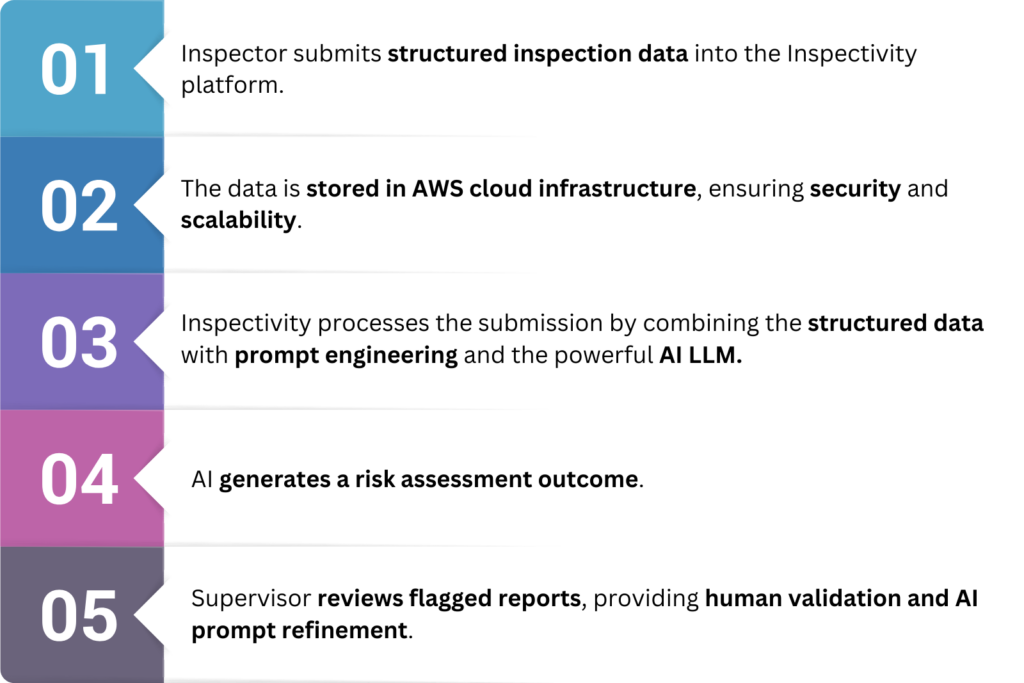

But how does AI-based prioritisation actually work?

Consider Inspectivity, a cloud-based inspection management platform. It captures structured data (text, images, response fields, and severity indicators), which can then be passed to a Large Language Model (LLM). With a well-crafted prompt — the instructions given to the LLM — the AI “understands” what constitutes a high-risk finding. It returns a summary that highlights the most urgent inspections, possibly using a traffic light system (e.g., green for no issues, yellow for minor concerns, red for critical). The supervisor no longer needs to do blanket reviews. Instead, she can confidently home in on the red flags and swiftly take action.
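As a rough illustration of the traffic light idea, the sketch below assumes the LLM has been instructed to reply in JSON. The field names (`report_id`, `rating`, `reason`) and the sample reply are assumptions made for this example, not Inspectivity's actual output format.

```python
import json

# Hypothetical reply an LLM might be instructed to return.
llm_response = """
[
  {"report_id": "R-001", "rating": "green",  "reason": "No issues found"},
  {"report_id": "R-002", "rating": "red",    "reason": "Severe valve corrosion"},
  {"report_id": "R-003", "rating": "yellow", "reason": "Minor paint damage"}
]
"""

# Lower number means higher priority in the supervisor's queue.
RATING_ORDER = {"red": 0, "yellow": 1, "green": 2}


def triage(raw: str) -> list[dict]:
    """Parse the model's summary and sort it so red items come first."""
    items = json.loads(raw)
    return sorted(items, key=lambda item: RATING_ORDER[item["rating"]])


queue = triage(llm_response)
needs_review = [item["report_id"] for item in queue if item["rating"] == "red"]
print(needs_review)
```

Asking for a machine-readable reply is a design choice: it lets the platform sort and filter the results rather than leaving the supervisor to read a free-text summary.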

As the hype around AI subsides, companies should put a heightened focus on practical applications that empower employees in their daily jobs.

Understanding AI for Inspection Management

This article aims to:

- Explain the core components of AI and how they can fit into inspection management.

- Demonstrate how real-world professionals can harness AI to reduce inefficiencies.

- Demystify AI by breaking down technical concepts into accessible insights, inspiring inspectors and engineers to discover other innovative applications in their workflows.

While AI’s technical foundations, such as LLMs, cloud infrastructure, and prompt engineering, are gradually becoming better understood, many industrial teams still struggle to define concrete business use cases. As one McKinsey insight states, “The big question is how business leaders can deploy capital and steer their organizations closer to AI maturity.” Our supervisor’s story illustrates precisely the type of real-world scenario to which AI can bring immediate value. By exploring this example, we hope to trigger new ideas for practical implementations within engineering and inspection environments.

Moreover, the emerging concept of Explainable AI (XAI) emphasises transparency in how AI models arrive at conclusions — particularly relevant in inspections, where compliance and safety standards demand rigorous traceability. Explaining AI’s decision-making process can be challenging. Yet, in the high-stakes environments of asset integrity and industrial inspections, it is vital: “In a McKinsey survey of the state of AI in 2024, 40 percent of respondents identified explainability as a key risk in adopting generative AI.” XAI practices help build trust, making AI an auditable partner instead of a black box. By learning how data and prompts shape AI outcomes, teams can validate outputs and mitigate potential risks like hallucinations or bias.

Ultimately, our article is intended to demystify AI for front-line inspectors, site supervisors, engineering managers, and anyone else who sees the potential in AI but needs clarity on how to deploy it responsibly.

The Building Blocks of Generative AI

At the heart of modern AI are five essential components that, when combined correctly, can revolutionise inspection management:

- Large Language Models (LLMs) An LLM is an advanced AI system trained on massive data sets to generate human-like text. It “predicts” the next word in a sequence, which enables tasks like summarisation, Q&A, and risk assessment. Well-known models and products include ChatGPT (OpenAI), Gemini (Google), Claude (Anthropic), and Llama (Meta). Although these models don’t think like humans, they can process enormous textual inputs quickly and with surprising nuance.

- High-Quality Structured Data AI is only as good as the data it consumes. If critical inspection data is trapped in PDFs, spreadsheets, or text-based forms with no standard labelling, the model struggles to produce accurate, focused results. Inspectivity addresses this by guiding users to collect structured inspection details (dates, numeric readings, images, standard references, and so on), providing a robust data foundation that AI can confidently interpret.

- Cloud Infrastructure (AWS Models) Processing large AI workloads demands scalable and secure cloud environments. Inspectivity, for instance, leverages Amazon Web Services (AWS) to ensure reliability, security, and the computational muscle needed for real-time analysis. This infrastructure also ensures global accessibility and rapid deployment across remote worksites.

- Prompt Engineering How you “ask” the AI a question (the “prompt”) can dramatically affect the outcome. In inspection use cases, prompts might consist of (1) the underlying data (like inspection readings and images), (2) a specific question (e.g., “Which of these findings is most critical under standard X?”), and (3) instructions on how to structure the response. Well-crafted prompts yield focused, actionable insights. Poorly formed prompts cause confusion or irrelevant answers.

- AI Guardrails & Security Enterprises need robust procedures to secure confidential inspection data and prevent unauthorised access or “data leakage”. AI guardrails also safeguard the reliability of generated outputs, filtering out spurious or biased conclusions. A well-managed AI pipeline ensures that each client’s data is compartmentalised, reinforcing trust and regulatory compliance.

With these five components in place, organisations can continuously improve their AI outputs. Form-building, classification, and data refinement can be iterated upon, ensuring each version of AI-driven inspection gets smarter and more aligned with real-world needs.
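The three-part prompt described under Prompt Engineering (data, question, response instructions) can be sketched as follows. This is a minimal illustration only: the function `build_prompt`, the section labels, and the sample finding are assumptions, and the resulting string would still need to be sent to whichever LLM is in use.

```python
import json


def build_prompt(inspection_data: list[dict], question: str, response_format: str) -> str:
    """Assemble the three prompt parts: data, question, response instructions."""
    return "\n\n".join([
        "INSPECTION DATA:\n" + json.dumps(inspection_data, indent=2),
        "QUESTION:\n" + question,
        "RESPONSE FORMAT:\n" + response_format,
    ])


prompt = build_prompt(
    # Hypothetical finding, used only to show the shape of the data section.
    inspection_data=[{"item": "Valve V-12", "reading": "Not Ok", "severity": "high"}],
    question="Which of these findings is most critical under standard X?",
    response_format="Reply with one line per finding: <item> | <green/yellow/red> | <reason>.",
)
print(prompt)
```

Keeping the three parts separate makes it easier to iterate on one (for example, tightening the response format) without disturbing the others.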

AI Is Only as Good as the Data It’s Trained On

The truism “garbage in, garbage out” applies sharply to AI. For generative models to deliver meaningful analysis of inspection activities, the data must be clean, consistent, and contextualised.

- Structured, High-Quality Data When an inspector captures a photo of a corroded valve and tags it with a severity rating in Inspectivity, that data becomes a rich, labelled resource. Over time, the model learns how to spot “valve corrosion” as a potential high-risk condition. But if the inspector simply attaches unlabelled PDF documents or images with no context, the AI’s ability to interpret that content correctly deteriorates.

- Standardisation and Contextualisation In inspection management, AI-driven risk prioritisation depends on letting the model know what “critical” actually means. If “A1” on your form is a top-level fault category, the AI must have that definition. Otherwise, it might mistakenly downplay an urgent problem. By embedding relevant engineering standards and risk guidelines in the prompt, you teach the model to highlight truly high-risk issues.

- Overcoming Unstructured PDFs A major roadblock is unstructured legacy data. Many organisations rely on PDF forms, paper checklists, or spreadsheets that lack consistent formatting. Converting these into a machine-readable structure can be time-consuming. Yet this step is essential if you want AI to deliver accurate insights. Delaying digitisation only makes the final AI adoption more expensive and complicated.

For instance, consider how Inspectivity forms can be configured to systematically capture known risk parameters. Suppose you have critical checks for pressure vessels: a single inspection template might contain numeric fields for internal pressure, date of last hydro test, visual images, and issue severity. By digitally standardising these fields, the LLM can be told exactly which inputs to weigh most heavily for risk analysis.
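To make the idea of a standardised template concrete, here is a minimal sketch of the pressure vessel example as a typed record. The class name, field names, and the pressure unit are assumptions for illustration; they do not describe Inspectivity's actual form schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import date


@dataclass
class PressureVesselInspection:
    """Hypothetical template mirroring the fields named in the text."""
    vessel_id: str
    internal_pressure_bar: float  # numeric reading; the unit is an assumption
    last_hydro_test: date
    severity: str                 # e.g. "low", "medium", "high"
    image_files: list[str] = field(default_factory=list)

    def to_record(self) -> dict:
        """Flatten to plain types so the record can be serialised into a prompt."""
        record = asdict(self)
        record["last_hydro_test"] = self.last_hydro_test.isoformat()
        return record


inspection = PressureVesselInspection(
    vessel_id="PV-101",
    internal_pressure_bar=12.5,
    last_hydro_test=date(2021, 3, 14),
    severity="high",
    image_files=["pv-101-weld.jpg"],
)
print(inspection.to_record())
```

Because every inspection carries the same named fields, a prompt can refer to them explicitly (for example, "weigh `severity` and `last_hydro_test` most heavily") instead of relying on the model to infer structure from free text.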

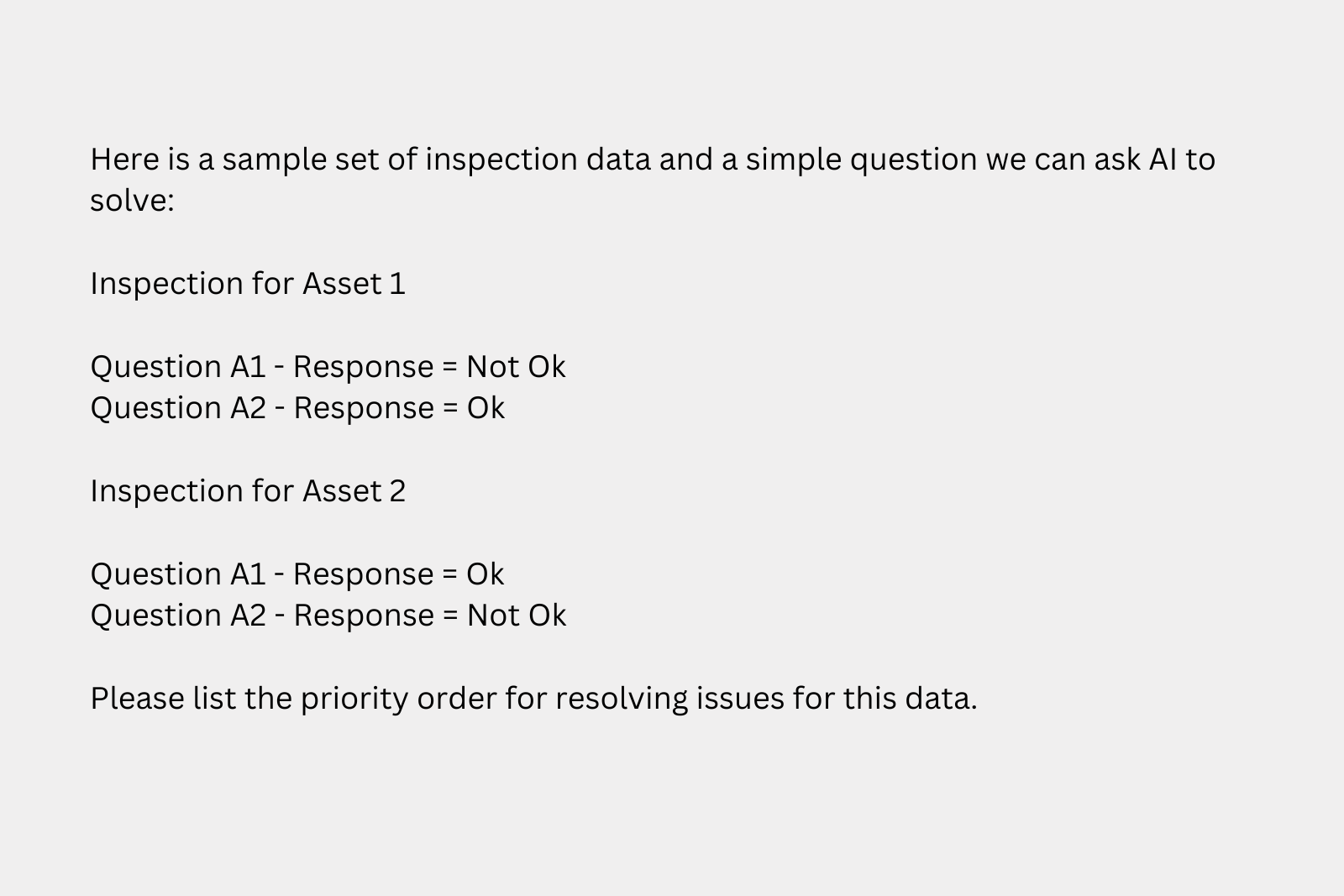

Prompt engineering refines this further by explicitly telling the AI which issues are more critical. For instance, consider the scenario in Screenshot 1:

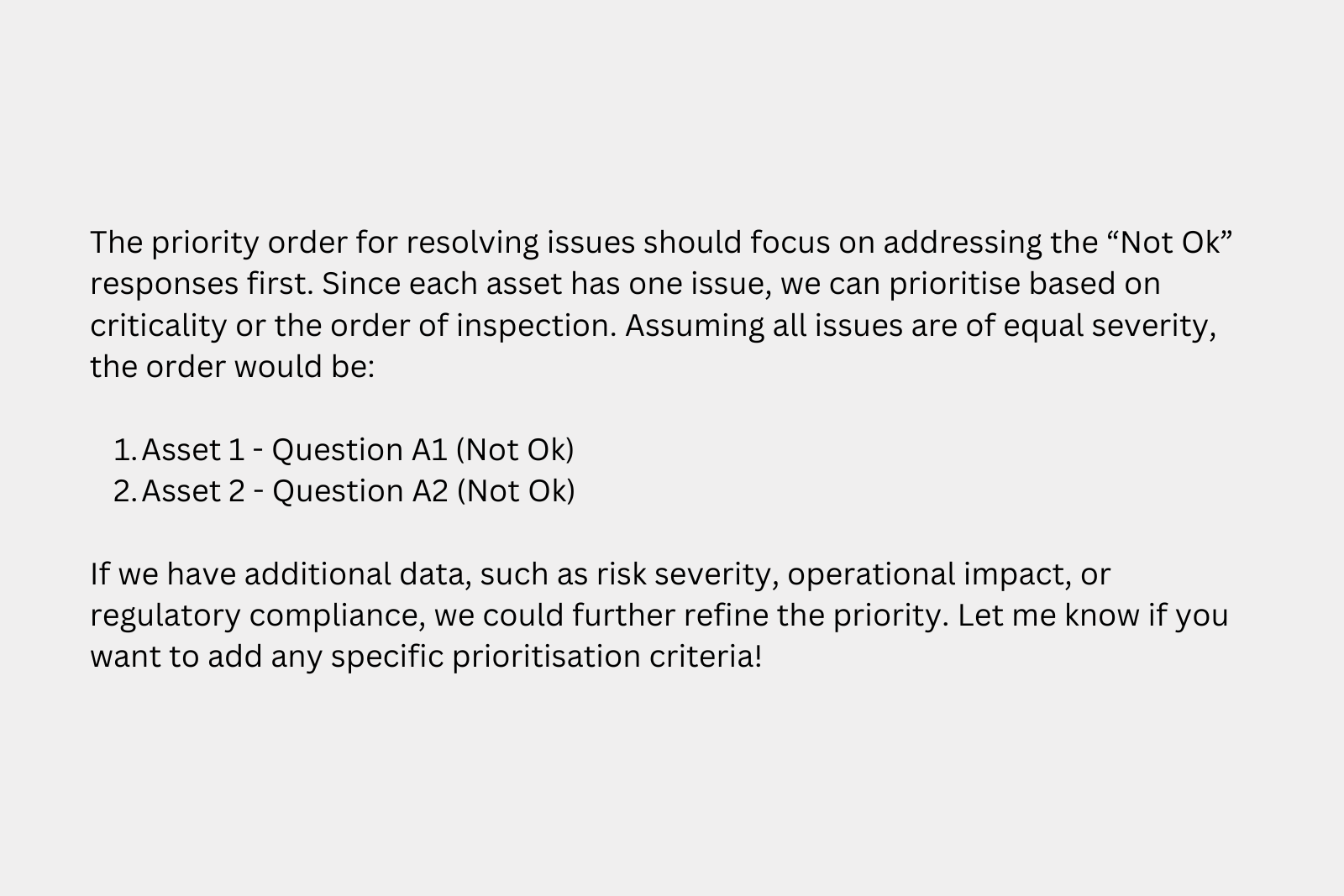

Without extra context, the AI’s response (shown in Screenshot 2) treats every “Not Ok” equally:

However, if we clarify that Question A2 is inherently more critical, we get a more valuable output (see Screenshot 3):

This simple adjustment illustrates how a properly engineered prompt — along with structured data — enables AI to highlight higher-risk findings for immediate attention. Ultimately, the synergy between standardised data capture and carefully tailored prompts underpins robust AI for inspection management. By explicitly embedding severity information or relevant engineering standards into the model’s instructions, teams can ensure the AI both identifies and prioritises urgent issues with minimal oversight — an essential capability in industrial environments where timeliness and accuracy are paramount.
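The difference between the two prompts can be sketched in code. The example below is hypothetical: the responses, the wording of the guidance, and the function `prioritisation_prompt` are illustrative assumptions rather than the prompts shown in the screenshots.

```python
from typing import Optional

# Hypothetical form responses; question IDs follow the A1/A2 style used above.
responses = {"A1": "Not Ok", "A2": "Not Ok", "A3": "Ok"}


def prioritisation_prompt(
    responses: dict[str, str],
    critical_questions: Optional[dict[str, str]] = None,
) -> str:
    """Build a prioritisation prompt, optionally stating which questions are critical."""
    lines = ["Inspection responses:"]
    lines += [f"- {question}: {answer}" for question, answer in responses.items()]
    if critical_questions:
        lines.append("Criticality guidance:")
        lines += [f"- {question}: {why}" for question, why in critical_questions.items()]
    lines.append("List the findings in order of priority.")
    return "\n".join(lines)


# Without guidance, the model has no basis for ranking one "Not Ok" above another.
without_context = prioritisation_prompt(responses)

# With guidance, the prompt states explicitly why A2 matters more.
with_context = prioritisation_prompt(
    responses,
    critical_questions={"A2": "Safety-critical; treat any 'Not Ok' as highest priority."},
)
print(with_context)
```

The only change between the two prompts is the guidance section, which is what gives the model grounds to rank A2 first.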

AI Risks – The Dangers of Poor Data and Model Selection

AI offers enormous promise, but with great power comes great responsibility. A lack of rigour in data management, or a failure to align AI configurations with engineering standards, can lead to costly missteps.

- Bad Data = Unreliable AI Incomplete or disorganised data prevents the AI model from accurately identifying risk indicators. This could lead to either missing a genuine red flag or generating “hallucinations” — fabrications that appear plausible but have no factual basis. For instance, if your inspection history is locked in inconsistent PDFs, the AI might glean partial truths and produce flawed advice.

- Compliance Pitfalls Compliance with safety guidelines cannot be left to guesswork. AI must be taught to align with relevant engineering standards. Inspectivity’s no-code platform lets organisations configure prompts directly, so that the AI applies standards-based severity definitions and required thresholds. If this is not done properly, non-compliant findings might slip by without the needed human review.

- Security Concerns Industrial inspection data can be highly sensitive — covering critical infrastructure or proprietary equipment. A data leak can have significant consequences. Secure AI solutions “silo” each client’s data, preventing any cross-contamination between separate organisations. Inspectivity ensures that your data remains accessible only to those with the right permissions. By hosting on secure cloud infrastructures (e.g., AWS) and enforcing best-practice encryption, AI-driven processes become safer to deploy.

The main takeaway: Digitising your inspection data is the first step. That data has to be maintained with consistent definitions, validated for accuracy, and integrated into an AI pipeline that enforces compliance and security at every stage.

AI Guardrails – How Inspectivity Ensures AI Safety

AI guardrails are rules, controls, and continuous monitoring measures that keep AI applications operating within ethical, legal, and operational boundaries. Think of them like safety barriers on a highway: they keep your AI solution from veering into dangerous territory.

- No Cross-Client Data Sharing Each organisation’s data sits in its own secure silo. Inspectivity never trains public AI models with proprietary data. That means your sensitive inspection data stays private.

- Prompt Engineering Control Inspectivity’s no-code environment enables clients to design and test their prompts without needing specialised data science expertise. AI doesn’t produce free-form judgments; it simply provides a structured response based on configured risk models, engineering guidelines, or compliance rules.

- Model Selection AI is not one-size-fits-all. Some models specialise in short answers; others excel at complex reasoning. Inspectivity’s approach focuses on enterprise-grade solutions that can handle heavy workloads, integrate with your engineering data, and deliver consistent reliability. This ensures the AI outputs remain relevant, accurate, and actionable.

- Why AI Governance Matters A key principle in advanced inspections is human-AI collaboration. AI should not supplant the trained inspector but rather augment them, speeding up triage. For highly regulated industries, human validation remains essential: an AI-driven alert still needs a final expert sign-off. By baking in these checks, Inspectivity’s guardrails reduce the risk of compliance failures, unsafe recommendations, or misguided maintenance decisions.
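One way to picture an output guardrail with human sign-off is the sketch below. It is a generic illustration under stated assumptions, not a description of how Inspectivity implements its guardrails: the function `apply_guardrails`, the rating scale, and the field names are all hypothetical.

```python
ALLOWED_RATINGS = {"green", "yellow", "red"}


def apply_guardrails(findings: list[dict], known_report_ids: set[str]) -> list[dict]:
    """Keep only well-formed findings and mark red ones for human sign-off."""
    accepted = []
    for finding in findings:
        if finding.get("report_id") not in known_report_ids:
            continue  # refers to a report that does not exist: possibly hallucinated
        if finding.get("rating") not in ALLOWED_RATINGS:
            continue  # rating outside the configured scale
        accepted.append({**finding, "needs_sign_off": finding["rating"] == "red"})
    return accepted


# Hypothetical model output containing one valid and two invalid findings.
model_output = [
    {"report_id": "R-002", "rating": "red"},
    {"report_id": "R-999", "rating": "red"},     # unknown report
    {"report_id": "R-003", "rating": "purple"},  # invalid rating
]
checked = apply_guardrails(model_output, known_report_ids={"R-001", "R-002", "R-003"})
print(checked)
```

In a real deployment, rejected findings would more likely be logged for audit than silently dropped, which supports the traceability that explainability calls for.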

The Future of AI – Move Fast or Fall Behind

Generative AI is evolving at a breakneck pace, and hesitating could mean losing ground to more agile competitors.

- Adoption Is Soaring ChatGPT, for instance, reached over 300 million weekly users in just two years. “Our research finds the biggest barrier to scaling is not employees — who are ready — but leaders, who are not steering fast enough.” This underscores how quickly organisational cultures can adapt if executives champion AI adoption.

- A Once-in-a-Generation Transformation AI is reshaping industries much like the invention of the steam engine. The shift from purely human-driven tasks to “superagency”, as described in Superagency: What Could Possibly Go Right with Our AI Future by Reid Hoffman, is happening right now. AI tools are rapidly graduating from simple chatbots to advanced strategic assistants.

- Short-Term Uncertainty vs. Long-Term Gains “The long-term potential of AI is great, but the short-term returns are unclear.” Many organisations grapple with the immediate ROI question. In the context of inspection management, the payoff can arrive sooner than expected, in the form of time saved, errors prevented, improved compliance, and better resource allocation. Those who delay may be left 6 to 12 months behind, a gap that compounds quickly as AI usage scales.

- Concrete Performance Gains By 2022, GPT-3.5 scored in the 70th percentile on SAT math; by 2024, GPT-4 performed in the top 10% of bar exam takers and achieved 90% accuracy on the USMLE. These leaps illustrate how AI’s reasoning capabilities are no longer limited to basic tasks but extend into complex problem-solving relevant to mission-critical operations. This evolution in AI performance will continue to spill over into industrial solutions like Inspectivity.

Act now or be left behind. AI doesn’t idle. Those who integrate AI into their inspection processes — capturing structured data, applying robust guardrails, and harnessing advanced risk analysis — are positioning themselves to dominate tomorrow’s industrial landscape.

“People are using [AI] to create amazing things. If we could see what each of us can do 10 or 20 years in the future, it would astonish us today.”

Sam Altman, cofounder and CEO of OpenAI.

Conclusion

Today’s industrial environments face growing pressures to optimise processes, reduce downtime, and maintain the highest safety standards. AI-powered inspection offers a transformative path: from freeing supervisors to focus on real emergencies to ensuring critical data is systematically captured, analysed, and flagged.

The example of Sarah reviewing 100 reports a day is not just a hypothetical scenario. It’s a challenge faced by inspection leaders everywhere. By digitising data, applying large language models, and structuring intelligent prompts, organisations stand to revolutionise their inspection processes. This is precisely what Inspectivity sets out to do: integrate robust AI analysis with secure, user-friendly workflows that adhere to engineering standards and compliance requirements.

Yes, there are risks — from poor data management to security pitfalls to AI hallucinations. But with explainability and guardrails in place, AI can become an invaluable partner. The pace of AI’s evolution, combined with real-world success stories, signals that organisations must adapt quickly or risk irrelevance. As generative models become increasingly integrated into day-to-day operations, the prime differentiator will be how effectively leaders manage the transition.

For those willing to take decisive steps now, AI can unlock unprecedented levels of productivity, risk reduction, and strategic insight. In the realm of inspection management, that can mean the difference between routine inefficiency and a streamlined workflow with fewer surprises, less downtime, and a sharper competitive edge.